The recent advent of generative Artificial Intelligence* (genAI) has already revolutionized our lives and societies through tools like ChatGPT or Gemini. For now, genAI is mainly used to generate multimedia data (text editing, summarization, photo editing, video generation, etc.). In the near future, however, genAI capabilities will be extended to the generation of more technical data, such as those produced in academic research labs. This will have unprecedented consequences for the production of scientific knowledge that must be anticipated, notably because genAI can hallucinate*.

In the complex landscape of molecular biology, tiny hallucinated details could go unnoticed in large datasets, leading to erroneous conclusions (e.g. an imaginary biomarker) with devastating consequences, from corruption of the scientific literature to wasted clinical trials. However, prohibiting genAI in scientific research would deprive the research and medical communities of powerful tools. To address this dilemma, researchers from Irig propose a list of use cases in which genAI can be used safely thanks to a well-chosen risk-mitigation policy. Their article presents about ten use cases and classifies them into three categories: hypothesis generation, data generation and computational biology software improvement.

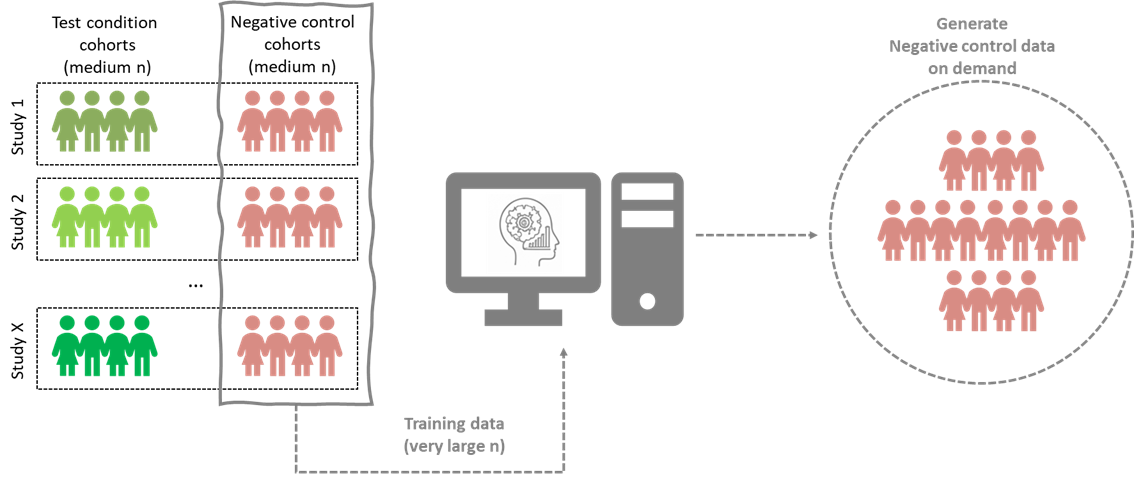

Figure: Illustration of one of the proposed use cases.

Completing a cohort by generating data about additional patients in the disease group (in green) would be highly risky, as any undetected hallucination would lead to a biased representation of the disease. Conversely, complementing the healthy group (in red), which serves as a control in the study, can be compliant with a risk-mitigation policy. First, undetected hallucinations here would lead to increased diversity within the control group, which is known to be an efficient way to limit the risk of false discoveries. Second, healthy individuals have typically been included more often in cohort studies, so the data potentially available to train the genAI tool are larger, more robust and more consistent. This example illustrates how a given genAI algorithm suited to a given task can be used in different ways, with different exposure to hallucination-induced risks.

Though not exhaustive, these use cases provide a first basis for the sound integration of genAI into the scientific approach, as they encourage researchers to take a critical view of its use.

*Generative Artificial Intelligence refers to algorithms that are able not only to analyse data and make decisions or predictions, like classical Artificial Intelligence (AI) tools, but also to generate new data.

*Hallucinations occur when a genAI tool answers a request (also known as a prompt) by generating details that seem plausible in some respects, but which are either wrong (e.g. a reference to a non-existent article) or impossible according to real-world constraints that are ignored by the genAI model (e.g. US President Abraham Lincoln commenting on the internet, as in the header figure).

BGE is a Joint Research Unit (UMR): UGA, CEA, CNRS and INSERM

Funding: The author’s research was supported by grants from the French National Research Agency: ProFI project (ANR-10INBS-0008), GRAL CBH project (ANR-17-EURE-0003), France 2030 program (ANR-19-P3IA-0003), PeptidOMS project (ANR-24-CE45-3296), and ProteoVir project (ANR-24-RRII-0001).